Open Source LLMs: How a 176 Billion Parameter Model Is Trained (Part 2 of 3)

In Part 1 of this series, I covered why open source LLMs matter, how the BLOOM project was organised and funded, and how its training data was assembled. This post goes deeper into the architectural decisions that shaped BLOOM, followed by a step-by-step walkthrough of how the model was trained.

Part 3, covering post-training fine-tuning and evaluation, is coming soon.

3. Architecture and Modeling

The Design Constraints

"The design space of possible architectures is immense," as the BLOOM team noted in their paper. Despite this, the team was notably conservative, even considering reusing the architecture of an existing model entirely. Where they did deviate from standard practice, they felt compelled to justify each deviation explicitly. They were aware of promising recent advances in architecture but had doubts about their ability to scale those advances to 176B parameters.

In practice, several key architectural decisions were effectively made for them by their requirements:

Decoder-only model: BLOOM was meant primarily for open-ended text generation, so a decoder-only architecture was the natural choice. Encoder-only models see context in both directions, which suits classification and extraction tasks better than generation. Encoder-decoder models suit transformation tasks such as translation. For generating text one token at a time, decoder-only wins. The standard training approach for decoder-only models is autoregressive language modeling: the model predicts the next token given all previous tokens, its prediction is compared with the actual next token, and its weights are adjusted accordingly.

Zero/few-shot capability: The team wanted BLOOM to generalise to tasks it was not explicitly trained for, which constrained their choices around the number of layers and attention heads.

Their final architecture:

How the Model Processes Language: A Step-by-Step Walkthrough

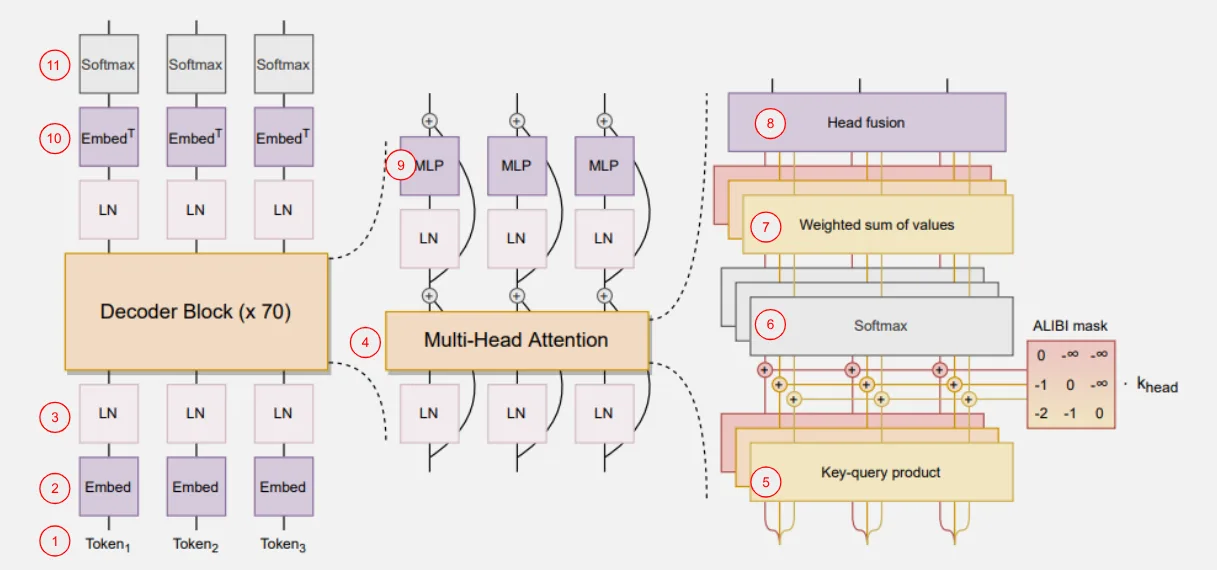

The numbers in brackets below correspond to the circled numbers in the architecture diagram above.

[1] Chunking the input

The training dataset is divided into chunks of 2,048 tokens, roughly 3 pages of text.
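A minimal sketch of the chunking step, assuming the corpus has already been tokenized into one flat list of ids. The helper names and the choice to drop a trailing partial chunk are illustrative assumptions, not BLOOM's actual data pipeline.

```python
def chunk_tokens(token_ids, seq_len=2048):
    """Split a flat list of token ids into full, non-overlapping sequences."""
    n_full = len(token_ids) // seq_len  # assumption: a trailing partial chunk is dropped
    return [token_ids[i * seq_len:(i + 1) * seq_len] for i in range(n_full)]


def inputs_and_targets(chunk):
    """Shift by one position: the model sees chunk[:-1] and must predict chunk[1:]."""
    return chunk[:-1], chunk[1:]


corpus = list(range(5000))  # stand-in for real token ids
chunks = chunk_tokens(corpus)
inputs, targets = inputs_and_targets(chunks[0])
```

Each target is simply the token that follows the corresponding input position, which is all that autoregressive training needs.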

[2] Embedding

Each token is mapped to a vector in 14,336-dimensional space, the hidden size of the 176B model. Think of this as placing each token in a vast coordinate system where position encodes meaning. Initial values are assigned randomly and refined throughout training.
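The embedding step is a table lookup, which can be sketched in a few lines. The tiny sizes, the Gaussian initialisation, and its standard deviation are assumptions chosen for readability, not BLOOM's settings.

```python
import random


def make_embedding_table(vocab_size, dim, seed=0):
    """One randomly initialised vector per vocabulary entry."""
    rng = random.Random(seed)
    return [[rng.gauss(0.0, 0.02) for _ in range(dim)] for _ in range(vocab_size)]


def embed(token_ids, table):
    """Look up the vector for each token id."""
    return [table[t] for t in token_ids]


table = make_embedding_table(vocab_size=10, dim=4)  # toy sizes
vectors = embed([3, 7, 3], table)
```

The same token id always maps to the same row, so repeated tokens start from identical vectors. Training then adjusts the rows themselves.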

[3] Layer Normalization

Each token vector is normalised to zero mean and unit variance across its dimensions, then rescaled by learned parameters. This keeps values in a consistent range and stabilises the training process.
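As a rough illustration, layer normalisation of a single token vector looks like this. The `gain` and `bias` arguments stand in for the learned parameters, and the `eps` value is a common default rather than a confirmed BLOOM setting.

```python
import math


def layer_norm(x, gain=None, bias=None, eps=1e-5):
    """Normalise one vector to zero mean and unit variance, then scale and shift."""
    n = len(x)
    mean = sum(x) / n
    variance = sum((v - mean) ** 2 for v in x) / n
    gain = [1.0] * n if gain is None else gain
    bias = [0.0] * n if bias is None else bias
    return [g * (v - mean) / math.sqrt(variance + eps) + b
            for v, g, b in zip(x, gain, bias)]


normalised = layer_norm([1.0, 2.0, 3.0, 4.0])
```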

[4] Multi-Head Attention

This is where relationships between tokens are established, such as between subjects and their verbs, or between adjectives and the nouns they describe. BLOOM's 176B model uses 112 parallel attention processors, called Attention Heads. The split is across dimensions, not across tokens: each token's 14,336-dimensional vector is projected into 112 slices of 128 dimensions, one per head. Every Attention Head therefore sees all 2,048 tokens in the chunk, but compares them within its own 128-dimensional subspace.

Different Attention Heads surface different kinds of relationships. Grammatical relationships, physical descriptions, logical dependencies: these emerge from the training process rather than being defined in advance. Two Attention Heads could even surface the same relationship type. Any Attention Head could surface no relationships and be completely redundant.
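The shape bookkeeping behind multi-head attention can be sketched as follows, using the 176B model's published configuration (hidden size 14,336 and 112 heads). In a real model the slices come out of learned projections, so the plain slicing here is a simplification.

```python
def split_heads(token_vector, n_heads):
    """Split one token's vector into equal per-head slices."""
    head_dim = len(token_vector) // n_heads
    return [token_vector[h * head_dim:(h + 1) * head_dim] for h in range(n_heads)]


token_vector = list(range(14336))  # stand-in for one token's projected vector
slices = split_heads(token_vector, n_heads=112)
```

Every head receives a 128-dimensional slice for every token in the sequence, so no head is limited to a subset of the tokens.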

[5] Query, Key, and Value Vectors

Each Attention Head applies its own learned weights to transform each token vector into three new vectors:

- The Query vector represents what this token is looking for in its context

- The Key vector represents what properties of this token may be relevant to other tokens

- The Value vector carries the actual content to be passed forward

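The dot product of one token's Query vector with another token's Key vector, scaled by the square root of the head dimension, produces a score for how much attention the first token should pay to the second. Two adjustments are then applied. ALiBi subtracts a penalty proportional to the distance between the two tokens, so nearby tokens get more weight. A causal mask prevents a token from attending to any token that comes after it, so the model cannot peek at the answer it is being trained to predict.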
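A minimal sketch of the scoring step for one head, combining scaled dot products, an ALiBi distance penalty, and a causal mask that hides later tokens. The slope formula is the geometric sequence used for power-of-two head counts. Other head counts, including BLOOM's 112, need an adjusted recipe that is not shown here.

```python
import math


def alibi_slopes(n_heads):
    """Per-head slopes for power-of-two head counts: 2^(-8/n), 2^(-16/n), ..."""
    return [2 ** (-8 * (h + 1) / n_heads) for h in range(n_heads)]


def attention_scores(queries, keys, slope):
    """Scaled dot-product scores with an ALiBi penalty and a causal mask."""
    head_dim = len(queries[0])
    scores = []
    for i, q in enumerate(queries):
        row = []
        for j, k in enumerate(keys):
            if j > i:
                row.append(float("-inf"))  # causal mask: later tokens are hidden
            else:
                dot = sum(a * b for a, b in zip(q, k)) / math.sqrt(head_dim)
                row.append(dot - slope * (i - j))  # penalty grows with distance
        scores.append(row)
    return scores


slopes = alibi_slopes(8)
toy = [[1.0, 0.0], [1.0, 0.0], [1.0, 0.0]]  # three identical toy vectors
scores = attention_scores(toy, toy, slope=slopes[0])
```

With identical Query and Key vectors, the only difference between scores in a row is the distance penalty, which makes the effect of ALiBi easy to see.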

To be clear about what is really happening here: a vector does not "look for" anything in the other vectors. These are mathematical abstractions that emerge through iterative training. As the model is trained on more data, the embedding space is gradually organised so that tokens with similar roles cluster together. A dog token and a cat token will be repositioned closer to each other on the dimensions that encode "animal", and further from a table token, as training surfaces those relationships repeatedly.

[6] Softmax Normalization

The attention scores are passed through a Softmax function, converting them into a probability distribution.
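Softmax itself is short enough to write out. This sketch subtracts the maximum score before exponentiating, a standard trick for numerical stability, and shows that a masked score of negative infinity ends up with zero weight.

```python
import math


def softmax(scores):
    """Convert raw scores into non-negative weights that sum to 1."""
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


weights = softmax([2.0, 1.0, float("-inf")])
```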

[7 and 8] Combining outputs

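Within each Attention Head, every token's output is the sum of the Value vectors of the tokens it attends to, weighted by the Softmax scores. The resulting 128-dimensional outputs from all 112 heads are then concatenated back into a single 14,336-dimensional vector per token and mixed by a learned output projection in the Head Fusion block.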
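The combining step can be sketched for one token and two toy heads. The learned output projection that follows the concatenation is left out for brevity, and all the numbers are made up for illustration.

```python
def weighted_sum(weights, values):
    """Blend Value vectors using one token's attention weights."""
    dim = len(values[0])
    return [sum(w * v[d] for w, v in zip(weights, values)) for d in range(dim)]


def concat_heads(head_outputs):
    """Join the per-head outputs for one token into a single vector."""
    return [x for head in head_outputs for x in head]


values = [[1.0, 0.0], [0.0, 1.0]]            # Value vectors of two tokens
head_a = weighted_sum([0.75, 0.25], values)  # this head favours the first token
head_b = weighted_sum([0.5, 0.5], values)    # this head weighs both equally
merged = concat_heads([head_a, head_b])
```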

[9] Multilayer Perceptrons (MLPs)

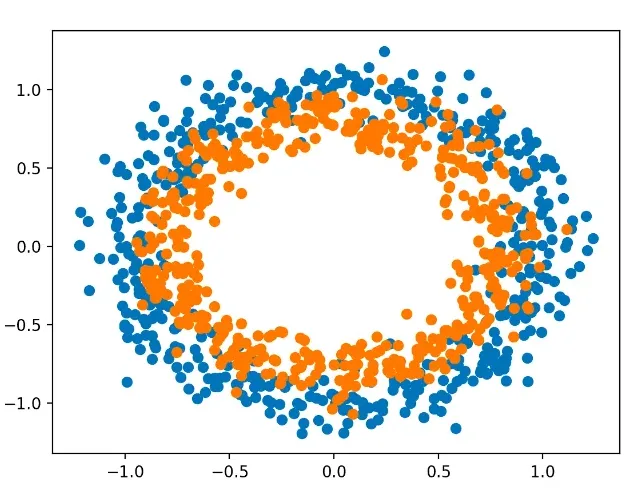

The token vectors are then passed into MLPs. A perceptron finds a separating boundary between vectors in a given space. Sometimes a simple flat boundary is not enough:

There is no straight line that separates the orange dots from the blue ones in the image above. But if you add a dimension and elevate the orange dots, a plane can be drawn. Remove that dimension again and you are left with a circular boundary instead of a straight one. This is what the MLPs accomplish.
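The lifting trick can be tried on a handful of made-up points. This sketch assumes one cluster sits near the origin with the other in a ring around it. The coordinates, the choice of squared distance as the extra dimension, and the threshold are all hypothetical.

```python
def lift(point):
    """Add a third coordinate equal to the squared distance from the origin."""
    x, y = point
    return (x, y, x * x + y * y)


inner = [(0.1, 0.2), (-0.3, 0.1), (0.0, -0.4)]  # hypothetical central cluster
outer = [(1.0, 0.0), (-0.8, 0.9), (0.0, -1.2)]  # hypothetical surrounding ring
threshold = 0.5                                 # the flat plane z = 0.5

inner_below = [lift(p)[2] < threshold for p in inner]
outer_above = [lift(p)[2] > threshold for p in outer]
```

Projected back to two dimensions, the plane `z = 0.5` becomes the circle `x² + y² = 0.5`, which is the curved boundary described above.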

BLOOM's MLPs expand each token vector by a factor of 4 (from 14,336 to 57,344 dimensions), apply a non-linear activation (GeLU), then compress back to 14,336 dimensions. This expansion does not add information to the tokens themselves. It adds space between the vectors, making it easier to draw clean boundaries, for example between "river bank" and "commercial bank". The MLPs surface non-linear relationships, and what they learn about those relationships is stored in each MLP's weight matrices.
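A toy version of the expand, activate, compress pattern, using 2 dimensions expanded to 8 instead of the real sizes. The weights are arbitrary placeholders and bias terms are omitted. GeLU is written in its exact form using the error function.

```python
import math


def gelu(x):
    """Exact GeLU: x times the standard normal CDF of x."""
    return 0.5 * x * (1.0 + math.erf(x / math.sqrt(2.0)))


def matvec(matrix, vector):
    """Multiply a matrix (list of rows) by a vector."""
    return [sum(w * v for w, v in zip(row, vector)) for row in matrix]


def mlp(x, w_up, w_down):
    """Expand, apply the non-linearity, then compress back."""
    hidden = [gelu(h) for h in matvec(w_up, x)]
    return matvec(w_down, hidden)


dim, expanded = 2, 8  # toy sizes with the same 4x expansion factor
w_up = [[0.1 * (r + c) for c in range(dim)] for r in range(expanded)]  # 8 x 2
w_down = [[0.1 for _ in range(expanded)] for _ in range(dim)]          # 2 x 8
out = mlp([1.0, -1.0], w_up, w_down)
```

Without the non-linearity between the two matrices, the pair would collapse into a single linear map and could only draw flat boundaries.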

The entire process from steps 4 to 9 is repeated across 70 decoder blocks, each with its own weights. Each block refines the token vectors a little further.

[10] Output projection

After 70 blocks, each token's final vector is projected onto a vector the size of BLOOM's vocabulary (roughly 250,000 tokens), and a Softmax turns it into a probability for every possible next token. At inference time, the output for the last token in the sequence is what gets used, for example by picking the highest-probability token. During training, the probability the model assigned to the actual next token in the training data is turned into a loss value (cross-entropy), and partial derivatives of that loss are computed for every weight in the entire system, from the initial embeddings in step 2 through to the output projection here. Every weight is then adjusted to reduce the loss.

This process repeats for every token in every chunk across the entire 1.6TB dataset. For the 176B parameter model, the full dataset was passed through exactly once. Smaller models can afford multiple passes.

There is no hand-written logic that tells the model which token should follow. The system simply produces a value, then every knob in the configuration is tweaked to make that value more desirable the next time the process runs.
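The loss in step 10 is typically a cross-entropy over the vocabulary. A minimal sketch for a single position, with a three-token toy vocabulary and made-up output scores:

```python
import math


def cross_entropy(logits, target_index):
    """Negative log-probability that Softmax assigns to the correct token."""
    peak = max(logits)
    log_total = peak + math.log(sum(math.exp(l - peak) for l in logits))
    return log_total - logits[target_index]


logits = [5.0, 0.0, 0.0]  # the model strongly favours token 0
loss_if_right = cross_entropy(logits, target_index=0)
loss_if_wrong = cross_entropy(logits, target_index=1)
```

The loss is small when the model put high probability on the actual next token and large when it did not. The weight updates are driven by the gradient of this number.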

How BLOOM's 176 Billion Parameters Are Distributed

- Embedding layer: ~3.6B parameters (vocabulary size of ~250,000 × embedding dimension of 14,336)

- Attention layers: ~57.5B parameters. 70 decoder blocks × 112 heads, each with Query, Key, and Value matrices of size 14,336 × 128 (since 14,336 ÷ 112 = 128), plus a 14,336 × 14,336 output projection per block

- MLPs: ~115.1B parameters. Two weight matrices per block, 14,336 × 57,344 and 57,344 × 14,336

- Remaining: Layer normalisation and bias terms, a small fraction of the total. The final output layer reuses the embedding matrix, so it adds no parameters of its own

The 176B total reflects the cumulative effect of these deep, wide layers across 70 decoder blocks.
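The arithmetic can be checked in a few lines using the 176B model's published configuration (hidden size 14,336, 70 blocks, vocabulary of 250,680). This sketch counts weight matrices only and ignores biases and layer normalisation, which is why it lands slightly under the official total.

```python
def bloom_weight_count(d_model=14336, n_layers=70, vocab=250680, mlp_factor=4):
    """Count weight-matrix parameters only (no biases, no layer norms)."""
    attention_per_block = 4 * d_model * d_model           # Q, K, V and output projection
    mlp_per_block = 2 * d_model * (mlp_factor * d_model)  # expand and compress matrices
    return {
        "embedding": vocab * d_model,
        "attention": n_layers * attention_per_block,
        "mlp": n_layers * mlp_per_block,
    }


counts = bloom_weight_count()
total = sum(counts.values())
```

The MLPs hold roughly two thirds of all parameters, and the attention layers most of the rest.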

Key Training Decisions

Single epoch training: The full 350B-token dataset was passed through once, prioritising compute efficiency at this scale.

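ALiBi positional encoding: Rather than adding learned positional embeddings to the token vectors, BLOOM used Attention with Linear Biases, which penalises attention scores in proportion to the distance between tokens. The BLOOM team reported smoother training and better downstream performance with it, and the technique was originally proposed to help models handle sequences longer than those seen in training.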

AdamW optimiser with learning rate warmup: A warmup period stabilises early training before the learning rate follows a cosine decay schedule toward convergence.
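A warmup followed by cosine decay can be sketched as a function of the training step. The step counts and learning rates below are illustrative placeholders, not BLOOM's published hyperparameters, and the linear warmup shape is an assumption.

```python
import math


def learning_rate(step, peak_lr=6e-5, min_lr=6e-6, warmup_steps=100, total_steps=1000):
    """Linear warmup to peak_lr, then cosine decay down to min_lr."""
    if step < warmup_steps:
        return peak_lr * (step + 1) / warmup_steps
    progress = min(1.0, (step - warmup_steps) / (total_steps - warmup_steps))
    return min_lr + 0.5 * (peak_lr - min_lr) * (1.0 + math.cos(math.pi * progress))


schedule = [learning_rate(s) for s in (0, 50, 100, 500, 1000)]
```

The rate climbs during warmup, peaks once warmup ends, and then falls smoothly to its floor by the final step.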

4. How Does BLOOM Compare to Contemporary and Modern Models?

BLOOM was released in July 2022, a few months before ChatGPT brought GPT-3-class models to wide public attention and shortly before the open source LLM landscape exploded. Looking at where things stand in 2026, the scale of progress is striking.

| Metric | BLOOM (2022) | GPT-3 (2020) | Llama 3.1 405B (2024) | Claude 4.6 (2026) |
|---|---|---|---|---|
| Parameters | 176 billion | 175 billion | 405 billion | ~1–2 trillion (est.) |
| Layers | 70 | 96 | 126 | ~150–200 (est.) |
| Attention Heads | 112 per layer | 96 per layer | 128 per layer | ~128–256 per layer (est.) |
| Embedding Dimension | 14,336 | 12,288 | 16,384 | ~10,000–16,000 (est.) |
| Vocabulary Size | ~250,000 tokens | ~50,000 tokens | ~128,000 tokens | ~500,000+ tokens (multilingual) |
| Training Data | ~350B tokens | ~300B tokens | ~15 trillion tokens | ~3–5 trillion tokens (est.) |
| Training Epochs | 1 | 1 | ~1–2 (est.) | ~1–2 (est.) |
| Context Window | 2,048 tokens | 2,048 tokens | 128,000 tokens | 1,000,000+ tokens |
| Architecture | Decoder-only Transformer | Decoder-only Transformer | Decoder-only Transformer (optimised) | Decoder-only Transformer (highly optimised) |
| Training Hardware | Jean Zay supercomputer | Microsoft Azure supercomputers | Meta's custom AI clusters | Custom AI clusters (Anthropic/Google) |
| Multilingual Focus | Yes (46 languages, 13 programming) | Primarily English | Strong multilingual support | Strong multilingual + multimodal |
| Key Innovations | ALiBi positional encoding | Sparse attention (early version) | Grouped Query Attention, long-context optimisations | Sparse attention, long-context, advanced alignment, multimodal |

A few things stand out. BLOOM and GPT-3 are remarkably similar in scale — both around 175B parameters, both trained on roughly 300–350B tokens, both with 2,048 token context windows. What separates them is not architecture but execution: GPT-3 was a closed, commercially-driven project, while BLOOM was built in the open with full transparency. Neither was particularly multilingual by modern standards, though BLOOM made far more deliberate effort in that direction.

The jump to modern models is dramatic. Llama 3.1 405B was trained on roughly 40× more data than BLOOM, with a context window about 60× larger. Claude 4.6's estimated parameter count suggests a model several times larger again, with a context window that can hold the equivalent of several novels. The core transformer architecture is largely the same (decoder-only, with attention and MLPs), but the scale, the data quality, and the post-training alignment work have transformed what these systems can do.

BLOOM's lasting contribution is not its benchmark performance, which was modest even at the time. It is the transparency. Every architectural decision, every training choice, every evaluation result was published. That made it one of the most studied models in the history of the field, and a foundational reference point for understanding how these systems are built.

Part 3, covering post-training fine-tuning and evaluation (including BLOOM's notably poor benchmark performance and what it tells us), is coming soon.