Open Source LLMs: A Threat to the AI Giants? (Part 1 of 3)

Over 2 million models are now hosted on HuggingFace, the so-called "GitHub of AI", with the second million added in the last year alone. If open source models are viable alternatives to the paid offerings from OpenAI and Anthropic, even if a few months behind in capability, how do we justify those companies' valuations?

Most people's LLM needs are relatively basic. Just as most people choose a mid-range phone over the latest flagship, a capable open source model will be "good enough" for the majority of use cases. The consumer market argument is compelling enough, but the enterprise market is where things get really interesting. Hosting your own open source model has a high upfront cost that most consumers can't justify, but enterprise customers absolutely can, and the privacy and control benefits make it an attractive proposition.

This makes open source LLMs a space worth taking seriously. So I decided to dig in.

What "Open Source" Actually Means for LLMs

Before going further, it's worth clarifying what open source means in this context, because it is not what I expected. In traditional software, open source means the full source code is available: you can read it, modify it, and understand exactly what it is doing. I expected the same for open source LLMs: full transparency on training data, training configuration, and the software used.

The reality is more limited. The vast majority of so-called open source models publish only the model weights, essentially the "executable" that you can run yourself, with no visibility into how it was built. You can use it, but you cannot truly understand it.

There is, however, one notable exception: BLOOM, published in July 2022 by a volunteer collective organised by HuggingFace. BLOOM made everything public, from the cost of training to the post-training evaluations. It is comparable in scale to GPT-3 (176 billion parameters versus GPT-3's 175 billion) and was released about two years after it, making it an ideal candidate for a deep dive into how a large language model is actually built.

This is Part 1 of a 3-part series. Most of the material here comes from BLOOM's HuggingFace page and its academic paper. Part 1 covers how the project was organised and how the training data was assembled. Part 2 covers the model architecture. Part 3 covers how the model is made ready for use post-training.

1. Organization and Process

Budget and Financing

In February 2021, the BLOOM project was awarded a compute grant on the Jean Zay supercomputer (currently ranked among the top 50 most powerful supercomputers globally), providing 5 million compute hours for training, valued at approximately €3 million. With the budget fixed, the team could determine the upper limits of their model and begin designing accordingly.

The team doesn't publish detailed timelines, but it appears roughly a year of planning, preparation, and smaller-scale testing preceded the start of full 176B parameter model training in March 2022.

Teams and People

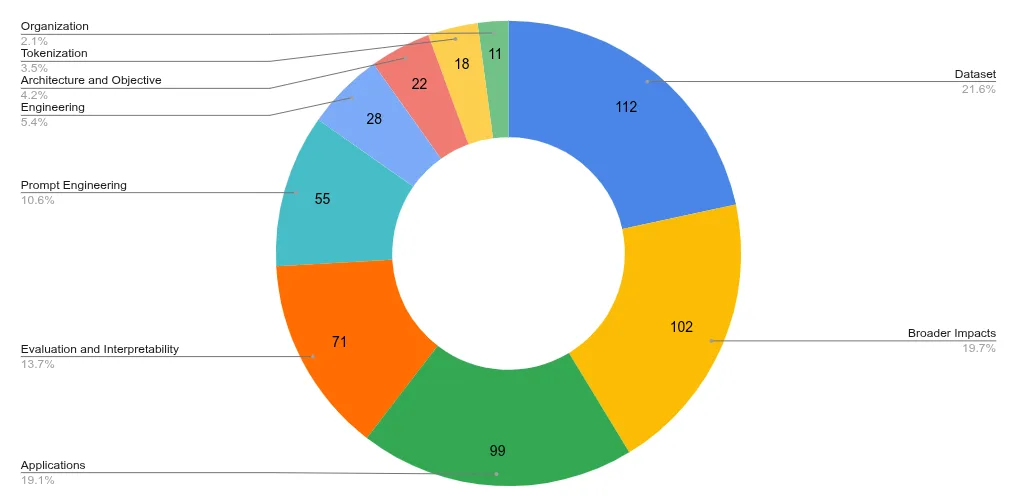

1,200 people registered as participants in the project, though the academic paper lists 518 by name, suggesting many registered without actively contributing. Here is how the active participants were distributed across teams:

As the chart shows, the largest share of effort went into building the dataset, assessing the model's broader impacts (likely over-represented given the transparency goals of the project), and building applications. The team purposes can be summarised as follows:

- Dataset: Collect, clean, balance, and ensure ethical use of training data

- Tokenization: Design and optimise the tokenizer for multilingual text

- Prompt Engineering: Craft prompts and fine-tune the model for instruction-following

- Architecture and Objective: Design the model's structure and define training goals

- Engineering: Build and optimise the training and inference infrastructure

- Evaluation and Interpretability: Test performance, fairness, and interpretability

- Broader Impacts: Assess ethical, societal, and environmental impacts

- Applications: Develop use cases, demos, and real-world applications

- Organization: Manage collaboration, resources, and documentation across teams

Execution Process

The project began with a series of workshops to sharpen objectives. Plans to incorporate under-represented languages such as Urdu and Vietnamese were confirmed at this stage. Importantly, the team also defined their evaluation suite of tasks and prompts before the model was built, specifically to avoid the pitfall of inadvertently designing evaluations around the model's strengths once it became familiar to the evaluators.

With objectives set, the team assembled the training dataset and defined the model architecture. This allowed engineering teams to configure hardware and supporting infrastructure.

Training proceeded in stages: a 560M parameter model first, followed by 1.1B, 1.7B, 3B, 7.1B, and finally the full 176B parameter model. The smaller models served primarily to stress-test the training configuration and surface any issues early, though they also have value as lighter-weight models for specific fine-tuning tasks.

Once training was complete, the applications team configured the models for inference. Evaluation followed, using standardised test suites for translation, summarisation, and other capabilities. Adjustments to training configuration and data can be made relatively easily on the smaller models but not on the 176B model. Once that is trained, there is no going back.

2. Data

The ROOTS Dataset

The topic of collating training data is fascinating and probably deserves its own blog post. For BLOOM, the team created their own dataset named ROOTS, containing 1.6TB of data across at least 46 natural languages and 13 programming languages. The "at least" is deliberate: Russian was not included in the dataset, yet BLOOM was subsequently found to be capable of conversing in Russian, a testament to how difficult it is to fully control a dataset of this scale.

ROOTS is composed of:

- Common Crawl (~38% of total data): An enormous, freely available dataset scraped from the internet over 19 years, augmented monthly. It is noisy and legally ambiguous, but vast. Both Anthropic and OpenAI have made donations to the Common Crawl organisation, though neither is transparent about how much they use it.

- GitHub code (~15% of total data)

- Curated language-specific sources (~47%): For example, archives of Le Monde articles for French, the Masader repository for Arabic. Some sources are creative, such as subtitle files for more obscure languages.
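To put those percentages in absolute terms, here is a quick back-of-the-envelope calculation. It assumes the rounded shares above apply directly to the 1.6TB total, so the results are my approximations rather than figures published by the BLOOM team.

```python
# Approximate size of each ROOTS component.
# Assumption: the rounded shares quoted above apply to the full 1.6TB.
TOTAL_TB = 1.6

shares = {
    "Common Crawl": 0.38,
    "GitHub code": 0.15,
    "Curated language-specific sources": 0.47,
}


def component_sizes(total_tb, shares):
    """Return the approximate size in TB of each component."""
    return {name: round(total_tb * share, 2) for name, share in shares.items()}


sizes = component_sizes(TOTAL_TB, shares)
for name, size in sizes.items():
    print(f"{name}: ~{size} TB")
```

Even the smallest slice, the GitHub code, works out to roughly a quarter of a terabyte of text.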

Every piece of data was stored with a metadata label traceable to its source. The raw data then went through a substantial deduplication effort (repeated Wikipedia articles introduce bias, for example), data cleansing (removal of credit card numbers, national ID numbers, phone numbers, and similar personally identifiable information), and quality control to remove non-human-generated content such as SEO data and spam.
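To make the deduplication and cleansing steps more concrete, here is a minimal sketch of what they could look like. This is not the BLOOM team's pipeline: the function names, the regular expressions, and the `source` and `text` field names are all hypothetical, and real PII detection needs far more care than two patterns.

```python
import hashlib
import re

# Illustrative patterns only (assumptions, not the BLOOM team's rules).
CARD_RE = re.compile(r"\b\d(?:[ -]?\d){12,15}\b")   # 13 to 16 digits
PHONE_RE = re.compile(r"\+?\d[\d\s().-]{7,}\d")     # loose phone shape


def scrub_pii(text):
    """Replace card-like and phone-like numbers with placeholders."""
    text = CARD_RE.sub("[CARD]", text)
    return PHONE_RE.sub("[PHONE]", text)


def normalise(text):
    """Lowercase and collapse whitespace so near-identical copies match."""
    return " ".join(text.lower().split())


def deduplicate(documents):
    """Keep the first document for each distinct normalised text."""
    seen = set()
    unique = []
    for doc in documents:
        digest = hashlib.sha256(normalise(doc["text"]).encode("utf-8")).hexdigest()
        if digest not in seen:
            seen.add(digest)
            unique.append(doc)
    return unique


docs = [
    {"source": "wiki/en", "text": "Paris is the capital of France."},
    {"source": "crawl/123", "text": "paris is  the capital of France."},
    {"source": "crawl/456", "text": "Call me on +44 20 7946 0958 today."},
]

# Each record keeps its source label, mirroring the traceability described above.
cleaned = [{**doc, "text": scrub_pii(doc["text"])} for doc in deduplicate(docs)]
for doc in cleaned:
    print(doc["source"], "->", doc["text"])
```

Hashing a normalised copy only catches exact and near-exact repeats. Catching paraphrased or partially overlapping documents would need fuzzier matching, which I have left out here.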

The final language distribution of the ROOTS dataset:

A Note on Tokenization

The tokenization process was considerably more complex than I expected. Splitting text on spaces and punctuation is straightforward enough, but tokenizing individual words in a way that works well across dozens of languages is not. Off-the-shelf tokenizers are generally designed for English, which is a problem when multilingual support is a primary goal.
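A quick experiment shows why the simple approach breaks down. The sketch below splits on whitespace and punctuation with a regular expression; `naive_tokenize` is my own hypothetical helper, not anything from BLOOM. It works tolerably for English but returns a Chinese sentence as one unbroken token, because Chinese does not put spaces between words.

```python
import re


def naive_tokenize(text):
    """Split into runs of word characters and single punctuation marks."""
    return re.findall(r"\w+|[^\w\s]", text)


english = naive_tokenize("Tokenizers are hard, really!")
chinese = naive_tokenize("我喜欢学习语言")

print(english)
print(chinese)
```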

The issue matters because the model learns relationships between tokens, not words. Poor tokenization produces poor token relationships. The BLOOM team benchmarked their multilingual tokenizer against single-language specialist tokenizers, targeting no more than a 10% difference in tokens per word. For Chinese, for example, their tokenizer produced 1.58 tokens per word versus 1.50 for a specialist Chinese tokenizer, a difference of roughly 5% and within the acceptable range.
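The comparison itself is simple arithmetic. Here is a small sketch using the Chinese figures quoted above; the function names and the way I express the 10% target as a ratio are my own framing, not code from the BLOOM project.

```python
def relative_difference(multilingual, specialist):
    """Extra tokens per word, as a fraction of the specialist's figure."""
    return (multilingual - specialist) / specialist


def within_target(multilingual, specialist, max_diff=0.10):
    """True if the multilingual tokenizer is within the allowed margin."""
    return relative_difference(multilingual, specialist) <= max_diff


diff = relative_difference(1.58, 1.50)
print(f"Chinese: {diff:.1%} more tokens per word")
print("Within 10% target:", within_target(1.58, 1.50))
```

A hypothetical tokenizer producing 1.70 tokens per word against the same 1.50 baseline would be about 13% worse and would miss the target.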

A Note on Vocabulary

Tokenization strategy directly determines the model's vocabulary, the set of distinct tokens the model works with. A richer vocabulary is generally better, provided the tokenization is meaningful. Push the vocabulary too far, however, and each token appears less often in the training data and so carries a weaker signal, an important tradeoff in multilingual model design.
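One way to see the tradeoff is to count how often each vocabulary entry actually appears. The toy corpus below is deliberately contrived, and comparing word-level against character-level tokens is my own simplification rather than how BLOOM's tokenizer works: the word-level vocabulary is larger, but each entry is seen far fewer times.

```python
from collections import Counter

# Contrived toy corpus: many distinct words built from very few letters.
corpus = ["tea eat ate", "eta tee tat", "ate tea"]


def token_stats(corpus, tokenize):
    """Return (vocabulary size, mean occurrences per vocabulary entry)."""
    counts = Counter(token for line in corpus for token in tokenize(line))
    vocab_size = len(counts)
    return vocab_size, sum(counts.values()) / vocab_size


word_stats = token_stats(corpus, str.split)
char_stats = token_stats(corpus, lambda line: list(line.replace(" ", "")))

print("word-level:", word_stats)
print("char-level:", char_stats)
```

The character-level vocabulary has only three entries, each seen eight times on average, while the six word-level entries are seen fewer than twice each. Real tokenizers sit somewhere between these two extremes.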

Part 2 covers the architectural decisions that shaped BLOOM and a step-by-step walkthrough of how the model processes language.