Book Review: Why Machines Learn

In his public talks on how large language models (LLMs) work, Anil Ananthaswamy starts by asking audience members to raise a hand if they think LLMs are capable of reasoning. The vast majority do. He asks the same question again at the end of the talk, and typically only about half the hands go up.

I went through a very similar journey while reading Why Machines Learn. If you want the veil of mystery around modern AI to be lifted, without the subject being dumbed down, this is the book I would recommend.

Making the Maths Intuitive

What I loved most is that Ananthaswamy explains machine-learning concepts through the mathematics that drives them, but in a way that is genuinely intuitive. He walks through how data is represented as vectors, how probability distributions are estimated, how data gets embedded in vector spaces, and how matrices are manipulated to transform those vectors. From there he builds up to the universal approximation theorem, gradient descent, backpropagation, and self-supervised learning.
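To give a flavour of how small these ideas are once written down, here is a minimal sketch of gradient descent fitting a straight line. It is my own illustration, not code from the book: the function name `fit_line_gd`, the learning rate, the step count, and the toy data are all assumptions chosen for the example.

```python
def fit_line_gd(xs, ys, lr=0.05, steps=2000):
    """Fit y = w*x + b by gradient descent on mean squared error."""
    w, b = 0.0, 0.0
    n = len(xs)
    for _ in range(steps):
        # Prediction errors under the current parameters.
        errors = [w * x + b - y for x, y in zip(xs, ys)]
        # Partial derivatives of the mean squared error.
        grad_w = 2 * sum(e * x for e, x in zip(errors, xs)) / n
        grad_b = 2 * sum(errors) / n
        # Step downhill, against the gradient.
        w -= lr * grad_w
        b -= lr * grad_b
    return w, b


# Toy data generated from y = 2x + 1 (illustrative values, not from the book).
xs = [0.0, 1.0, 2.0, 3.0, 4.0]
ys = [1.0, 3.0, 5.0, 7.0, 9.0]

w, b = fit_line_gd(xs, ys)
print(f"w = {w:.3f}, b = {b:.3f}")
```

On this noise-free data the loop should settle on a slope close to 2 and an intercept close to 1. Real models have vastly more parameters, but the update rule has the same shape.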

Understanding the maths made everything feel far less like magic and far more like engineering. Since reading the book, I have started noticing machine learning everywhere in daily life: how my fitness watch estimates sleep quality, how my phone's swipe keyboard predicts words, how recommendation systems adapt over time. You begin to see these as variations on the same underlying ideas.

Overfitting and the Bias-Variance Trade-off

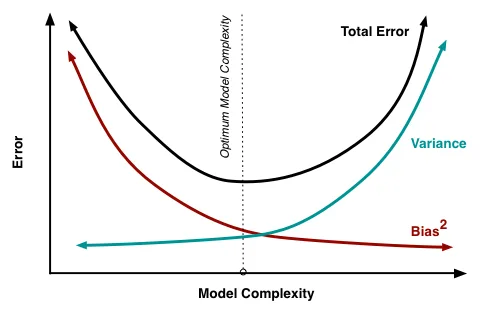

One highlight for me was his explanation of overfitting and the bias-variance trade-off. He uses a simple example: imagine training a model to distinguish between couches and chairs, but all of your training images of chairs happen to be wooden or metal. If your model achieves 100% accuracy on that training set, it may actually perform worse in the real world, because it has learned that "chair" means "wood or metal" and will fail to recognize plastic chairs entirely. Counter-intuitively, stopping training earlier and accepting less-than-perfect training accuracy can produce better generalization.
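The same effect shows up in a few lines of code. The sketch below is my own toy illustration, not an example from the book: the data are made-up points from the line y = x with alternating noise of ±0.5, and the helper names (`lagrange_predict`, `fit_line_least_squares`, `mse`) are hypothetical. One model is a polynomial flexible enough to pass through every training point; the other is a plain straight line.

```python
def lagrange_predict(xs, ys, x):
    """Evaluate at x the polynomial that passes through every (xs, ys) point."""
    total = 0.0
    for i, (xi, yi) in enumerate(zip(xs, ys)):
        weight = 1.0
        for j, xj in enumerate(xs):
            if j != i:
                weight *= (x - xj) / (xi - xj)
        total += yi * weight
    return total


def fit_line_least_squares(xs, ys):
    """Closed-form least-squares fit of y = slope * x + intercept."""
    n = len(xs)
    mean_x, mean_y = sum(xs) / n, sum(ys) / n
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    sxx = sum((x - mean_x) ** 2 for x in xs)
    slope = sxy / sxx
    return slope, mean_y - slope * mean_x


def mse(preds, targets):
    """Mean squared error between predictions and targets."""
    return sum((p - t) ** 2 for p, t in zip(preds, targets)) / len(targets)


# Training data: the true relationship is y = x, plus alternating noise of 0.5.
x_train = [0.0, 1.0, 2.0, 3.0, 4.0, 5.0]
y_train = [0.5, 0.5, 2.5, 2.5, 4.5, 4.5]
# Held-out points taken from the noise-free line y = x.
x_test = [0.5, 1.5, 2.5, 3.5, 4.5]
y_test = [0.5, 1.5, 2.5, 3.5, 4.5]

slope, intercept = fit_line_least_squares(x_train, y_train)

interp_train_mse = mse([lagrange_predict(x_train, y_train, x) for x in x_train], y_train)
interp_test_mse = mse([lagrange_predict(x_train, y_train, x) for x in x_test], y_test)
line_train_mse = mse([slope * x + intercept for x in x_train], y_train)
line_test_mse = mse([slope * x + intercept for x in x_test], y_test)

print("polynomial: train", interp_train_mse, "test", interp_test_mse)
print("line:       train", line_train_mse, "test", line_test_mse)
```

The polynomial scores a perfect zero error on the training points because it has memorized the noise, and it pays for that on the held-out points. The straight line is visibly wrong on the training set yet far closer to the truth everywhere else.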

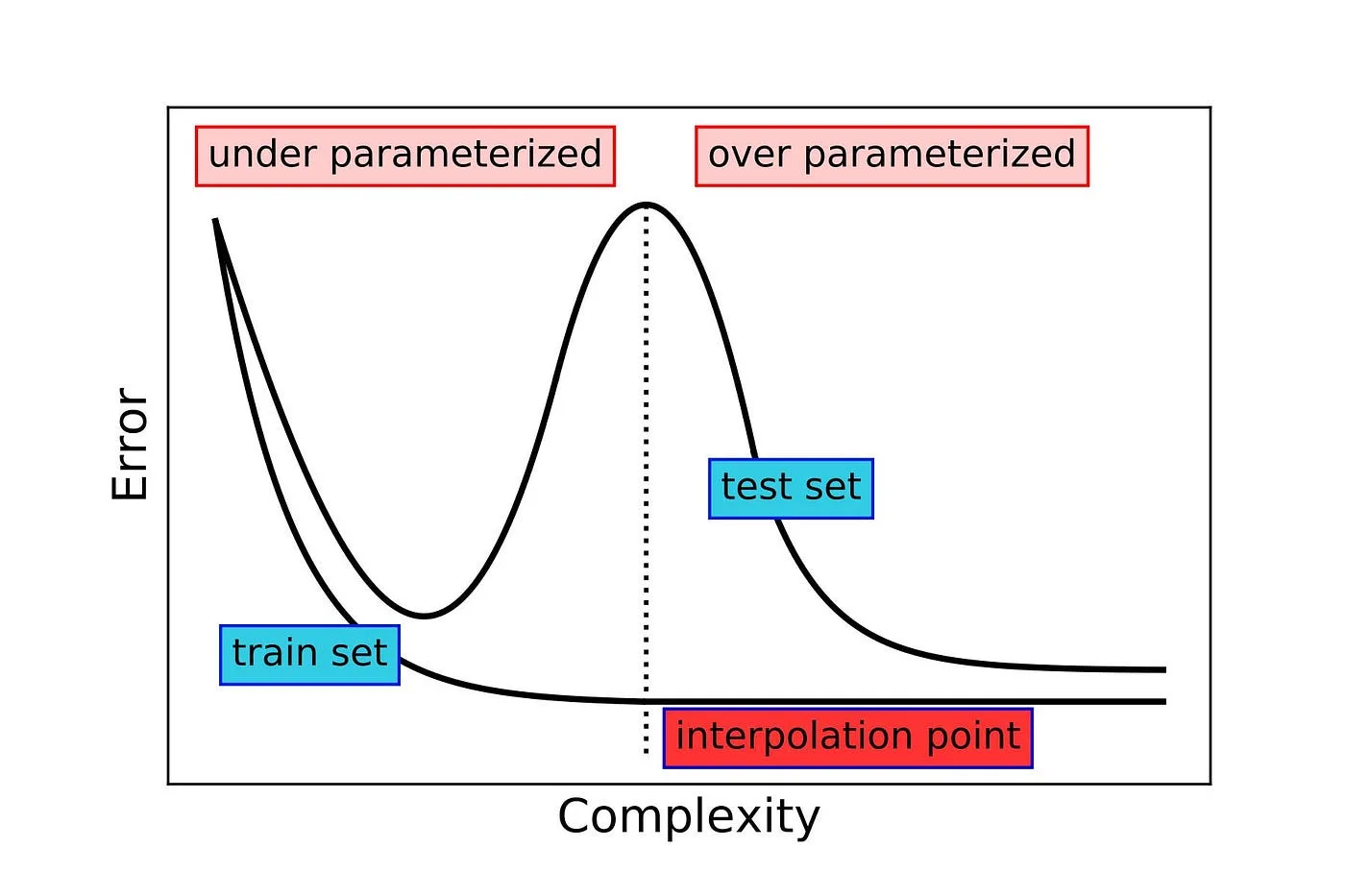

Then comes a fascinating twist: empirically, if you keep training large modern models past this point and massively over-parameterize them, the overfitting problem can disappear again and performance on unseen data starts improving once more. This phenomenon, known as double descent, was discovered experimentally first and only later became the subject of theoretical investigation. It really stood out to me because it flips the usual scientific order: observation first, explanation later. It is a reminder of how much of modern AI is still not fully understood, even by the people building it.

Language and Cognition

Another part I found particularly thought-provoking was the discussion of language acquisition and cognition, especially in relation to Chomsky's theories. Chomsky argued that language is too complex to be learned purely from data, and that the brain must contain a built-in language acquisition device with grammatical switches that are flipped depending on the language environment (for example, whether sentences follow subject-verb-object or subject-object-verb order).

The ability of LLMs to learn generalized concepts (that a plastic chair is still a chair, or that verbs serve similar functions across very different sentence structures) is forcing us to rethink what can be learned from data alone. In that sense, these models are not just engineering achievements; they are becoming experimental tools for understanding human cognition itself.

A Perfect Pairing with AI Assistants

One unexpected bonus of reading this book in 2025 is how well it pairs with tools like ChatGPT or Claude. Being able to pause, ask for clarification, or explore a concept more deeply made the learning process far more engaging. It honestly made me a bit envious of today's students, and very glad that my own kids will grow up with access to these kinds of learning accelerators.

Future Editions

The book was published in 2024, and the content on LLMs appears mainly in the epilogue and afterword. They are excellent, but noticeably denser and more compressed than the rest of the book, and you can tell they were added late in the publishing process to keep up with how fast the field is moving. I would love to see those sections expanded in future editions.

Final Thoughts

Overall, Why Machines Learn is one of the best explanations I have read of what is actually happening inside modern AI systems, and just as importantly, what is still mysterious and unresolved. You may finish the book less convinced that today's models are "reasoning" in any human sense, but far more impressed by what emerges from relatively simple mathematical principles scaled to extraordinary levels.